Most teams do not have a tooling problem.

They have an evaluation problem.

A new AI product launches, people test it for fifteen minutes, everyone gets excited by one clever output, and suddenly the team is debating whether to switch workflows. A week later, nobody is using it.

That cycle repeats because many tools are judged on the wrong things: first impressions, feature count, or hype. But in real work, the best AI tool is usually not the one with the longest landing page. It is the one that fits the job, reduces friction, and keeps saving time after the novelty wears off.

This guide shows a practical way to evaluate AI tools quickly without turning every test into a long internal project.

Start with the workflow, not the tool

The most common mistake is evaluating the product before defining the job.

Before you open a demo, answer one question:

What exact task are we trying to improve?

That task should be narrow enough to test clearly. For example:

- summarizing customer calls

- drafting content briefs

- finding sources faster

- cleaning internal notes

- writing first-pass code changes

- answering repeated support questions

A vague goal like “use more AI” leads to vague testing. A specific workflow creates a fair comparison.

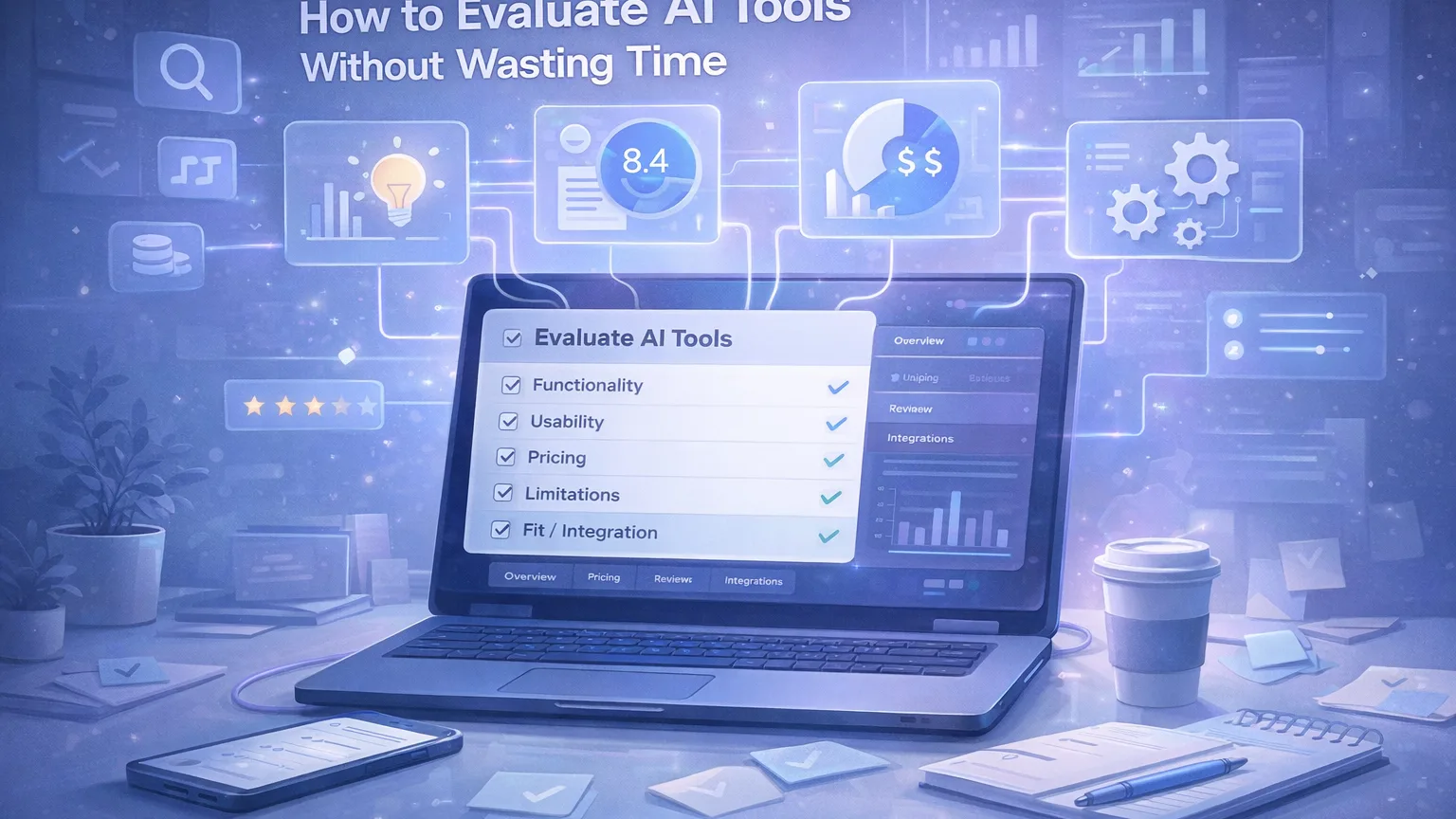

Use a five-part evaluation checklist

A simple evaluation model is enough for most teams. You do not need a giant scorecard.

Use these five criteria:

1. Functionality

Can the tool actually do the job you need?

This sounds obvious, but many AI products are good at adjacent tasks, not the real one.

When testing functionality, ask:

- Can it handle the input format we use?

- Can it produce an output we would actually keep?

- Can it repeat that result more than once?

- Does it fail on common edge cases?

A tool that looks impressive in a perfect demo but breaks on normal inputs is not ready for workflow use.
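You can make the repeatability and edge-case questions concrete with a small harness. The sketch below is hypothetical. `run_tool` stands in for however you call the tool (API, CLI, or a manual copy-paste wrapper), and `is_acceptable` stands in for a reviewer's judgment of whether the output would be kept. The harness runs each real input several times and reports the share of runs that produced a keepable result.

```python
from typing import Callable, Sequence


def repeatability_rate(
    run_tool: Callable[[str], str],        # hypothetical wrapper around the tool under test
    is_acceptable: Callable[[str], bool],  # hypothetical check: "would we keep this output?"
    inputs: Sequence[str],                 # real inputs from recent work, including edge cases
    runs_per_input: int = 3,
) -> dict[str, float]:
    """Return, per input, the fraction of runs whose output was acceptable."""
    if runs_per_input < 1:
        raise ValueError("runs_per_input must be at least 1")
    rates: dict[str, float] = {}
    for item in inputs:
        # Run the same input repeatedly; True counts as 1 when summed.
        kept = sum(is_acceptable(run_tool(item)) for _ in range(runs_per_input))
        rates[item] = kept / runs_per_input
    return rates


if __name__ == "__main__":
    # Stub tool for illustration only: it returns nothing for empty input (an edge case).
    def fake_tool(text: str) -> str:
        return text.upper() if text else ""

    print(repeatability_rate(fake_tool, bool, ["call notes", ""], runs_per_input=3))
    # {'call notes': 1.0, '': 0.0}
```

A rate well below 1.0 on ordinary inputs is the "breaks on normal inputs" signal described above.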

2. Usability

A tool can be powerful and still be a bad fit.

If people need too many steps, too much setup, or too much prompt babysitting, adoption will be weak.

Check:

- how long it takes to get a usable result

- how much manual cleanup is still required

- whether non-experts can use it consistently

- whether the interface supports repeated daily use

In practice, usability often matters more than raw capability.

3. Pricing

AI tools are easy to justify in theory and expensive in aggregate.

The right question is not just “How much does it cost?” but:

How much does it cost relative to the time it saves or the output quality it adds?

Look at:

- monthly seat cost

- usage limits

- hidden upgrade pressure

- whether one tool can replace two smaller ones

- whether the cost still makes sense at team scale

A cheap tool that saves no time is expensive. A pricier tool that removes a weekly bottleneck may be worth it.
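The cost-versus-value comparison reduces to simple arithmetic. This is a minimal sketch that assumes you can estimate the hours saved per person per month and a loaded hourly cost. Every number in the example is a hypothetical placeholder, not a benchmark.

```python
def monthly_net_value(
    seat_cost: float,             # price per seat per month
    seats: int,                   # number of people who would use the tool
    hours_saved_per_seat: float,  # estimated hours saved per person per month
    hourly_cost: float,           # loaded cost of one hour of that person's time
) -> float:
    """Value of the time saved minus the subscription cost, per month, for the whole team."""
    value = seats * hours_saved_per_seat * hourly_cost
    cost = seats * seat_cost
    return value - cost


if __name__ == "__main__":
    # Hypothetical: a cheap tool that saves no time vs. a pricier one that removes a bottleneck.
    cheap = monthly_net_value(seat_cost=10, seats=8, hours_saved_per_seat=0.0, hourly_cost=50)
    pricier = monthly_net_value(seat_cost=40, seats=8, hours_saved_per_seat=4.0, hourly_cost=50)
    print(cheap, pricier)  # -80.0 1280.0
```

Varying `seats` is a quick way to check whether the cost still makes sense at team scale. The formula ignores usage limits and upgrade pressure, so those still need a separate look.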

4. Limitations

Every AI tool has constraints. The problem is not that limitations exist. The problem is discovering them too late.

Test for:

- output inconsistency

- context limits

- weak formatting control

- poor source quality

- bad collaboration support

- privacy or security concerns

- weak export or integration options

This is where many “great” tools quietly fail.

5. Fit and integration

A strong tool still loses if it does not fit the rest of the system.

Ask:

- Does it fit our existing workflow?

- Does it create extra copy-paste work?

- Can it integrate with the tools we already use?

- Will people actually adopt it without heavy process change?

The best tools usually feel like a workflow improvement, not a workflow replacement.

Run a short real-world test

Do not evaluate with made-up examples.

Pick a real task from the last week and run the tool against that.

A good test is:

- small enough to finish in one sitting

- representative of real work

- easy to compare against the current method

- repeatable by another person on the team

For example, if you are evaluating a research tool, use an actual brief your team needs this week. If you are evaluating a writing assistant, use a real draft. If you are testing an AI coding tool, give it a real bug or repetitive edit.

This immediately removes a lot of false positives.

Compare against the current workflow

A tool should not be judged in isolation.

It should be judged against what you do now.

That means comparing:

- time to first usable result

- amount of editing required

- output quality

- consistency

- ease of handoff to another teammate
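Recording the same measurements for the current method and for the candidate makes this comparison explicit. This is a sketch under assumptions: the field names and the 1–5 quality scale are illustrative choices, and the example numbers are made up.

```python
from dataclasses import dataclass


@dataclass
class TrialResult:
    minutes_to_usable: float  # time to first usable result
    minutes_editing: float    # manual cleanup before the output is kept
    quality: int              # reviewer rating on an illustrative 1-5 scale
    consistent: bool          # did repeat runs give comparable results?
    easy_handoff: bool        # could a teammate pick up the work without help?


def compare(current: TrialResult, candidate: TrialResult) -> dict[str, float]:
    """Differences between the two methods; positive numbers favor the candidate tool."""
    return {
        "minutes_saved": (current.minutes_to_usable + current.minutes_editing)
        - (candidate.minutes_to_usable + candidate.minutes_editing),
        "quality_change": candidate.quality - current.quality,
        "consistency_change": int(candidate.consistent) - int(current.consistent),
        "handoff_change": int(candidate.easy_handoff) - int(current.easy_handoff),
    }


if __name__ == "__main__":
    baseline = TrialResult(45, 10, 4, True, True)  # hypothetical: current manual method
    tool = TrialResult(5, 25, 3, False, True)      # hypothetical: candidate tool
    print(compare(baseline, tool))
    # {'minutes_saved': 25, 'quality_change': -1, 'consistency_change': -1, 'handoff_change': 0}
```

In this made-up case the tool is faster overall but produces lower-quality, less consistent output. That pattern often points to a narrower role, such as first drafts, rather than a full replacement.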

Sometimes the outcome is not “this tool is amazing.”

Sometimes the real conclusion is:

- the old workflow is already good enough

- the tool is only useful for first drafts

- the tool is useful for one sub-step, not the whole process

That is still a successful evaluation.

Do not let feature lists decide the outcome

Many teams overvalue broad capability and undervalue repeated usefulness.

A tool with ten advanced features is not automatically better than a tool that solves one task cleanly.

During evaluation, separate:

- interesting features

- useful workflow improvements

The second group matters more.

A good tool earns its place by being used again next week, not by winning the first demo.

Use a simple scoring model

You do not need a complicated framework. A lightweight score is enough.

Example:

| Criterion | Key question | Score (1–5) | Notes |
|---|---|---|---|
| Functionality | Can it do the real task reliably? | | |
| Usability | Is it fast and easy to use repeatedly? | | |
| Pricing | Does the value justify the cost? | | |
| Limitations | Are the tradeoffs acceptable? | | |
| Fit / Integration | Does it fit the current workflow? | | |

Then add one final line:

Would we actually use this every week?

That last question is often more valuable than the score itself.
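If scores are collected in a script rather than a document, the table and the weekly-use question can be combined. This is a minimal sketch that assumes equal weights across the five criteria and treats "Would we actually use this every week?" as a gate that overrides the average. Both choices are illustrative, not a fixed method.

```python
CRITERIA = ("functionality", "usability", "pricing", "limitations", "fit")


def evaluate(scores: dict[str, int], weekly_use: bool) -> tuple[float, str]:
    """Average the 1-5 scores; a 'no' on weekly use drops the tool regardless of score."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    if any(not 1 <= scores[c] <= 5 for c in CRITERIA):
        raise ValueError("scores must be between 1 and 5")
    average = sum(scores[c] for c in CRITERIA) / len(CRITERIA)  # equal weights (assumption)
    verdict = "shortlist" if weekly_use else "drop"
    return round(average, 2), verdict


if __name__ == "__main__":
    demo = {"functionality": 5, "usability": 4, "pricing": 3, "limitations": 4, "fit": 4}
    print(evaluate(demo, weekly_use=False))  # (4.0, 'drop')
```

A tool can score well on every row and still fail the weekly-use gate, which is why the gate is applied after the average rather than folded into it.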

Know when to stop testing

Many teams waste time evaluating too many tools in the same category.

A good stopping rule is:

- test two or three serious options

- compare them against the existing workflow

- pick one clear winner or decide not to adopt anything yet

If three good tools all perform similarly, the decision usually comes down to fit, cost, or simplicity—not endless additional testing.
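You can write the stopping rule down as a small decision function. This is a sketch that assumes each candidate and the current workflow already have a single average score on the same 1–5 scale. The `similar_margin` threshold and the choice of cost as the tie-breaker are illustrative assumptions. Fit or simplicity could serve as the tie-breaker instead.

```python
from typing import NamedTuple, Optional


class Candidate(NamedTuple):
    name: str
    score: float         # average 1-5 score across the five criteria
    monthly_cost: float  # total monthly cost at team scale


def decide(
    candidates: list[Candidate],
    baseline_score: float,        # current workflow, scored the same way
    similar_margin: float = 0.5,  # illustrative threshold for "performs similarly"
) -> Optional[str]:
    """Return the tool to adopt, or None to keep the current workflow for now."""
    if not 2 <= len(candidates) <= 3:
        raise ValueError("test two or three serious options")
    better = [c for c in candidates if c.score > baseline_score]
    if not better:
        return None  # nothing beats what the team does now
    top = max(c.score for c in better)
    # Tools within the margin of the best are treated as equivalent;
    # among those, pick the cheaper one instead of testing further.
    close = [c for c in better if top - c.score <= similar_margin]
    return min(close, key=lambda c: c.monthly_cost).name


if __name__ == "__main__":
    tools = [  # hypothetical candidates
        Candidate("Tool A", 4.2, 400.0),
        Candidate("Tool B", 4.0, 150.0),
        Candidate("Tool C", 3.1, 90.0),
    ]
    print(decide(tools, baseline_score=3.5))  # Tool B
```

Returning `None` is a legitimate outcome: it encodes the option to adopt nothing yet.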

A practical evaluation workflow

If you want a repeatable approach, use this order:

- define the exact task

- choose two or three candidate tools

- run a real-world test

- score each tool across the five criteria

- compare against the current workflow

- make a decision quickly

- review again after two weeks of real usage

That final review matters.

Some tools test well but fade in real use. Others look modest at first but become extremely useful once the workflow settles.

Final thoughts

The goal is not to find the “best AI tool” in the abstract.

The goal is to find the right tool for a specific job.

A practical evaluation process helps teams avoid hype, reduce wasted testing time, and make better decisions with less noise.

If a tool improves a real workflow, saves time repeatedly, and fits how the team already works, it is probably good enough to adopt.

That is a better standard than novelty.