Most internal knowledge bases fail for the same reason: they become storage, not support.

Teams create pages, docs, templates, and meeting notes, but very little of it stays useful. Search gets noisy. Important decisions disappear into chat threads. Old instructions sit next to new ones. People stop trusting the system, so they stop using it.

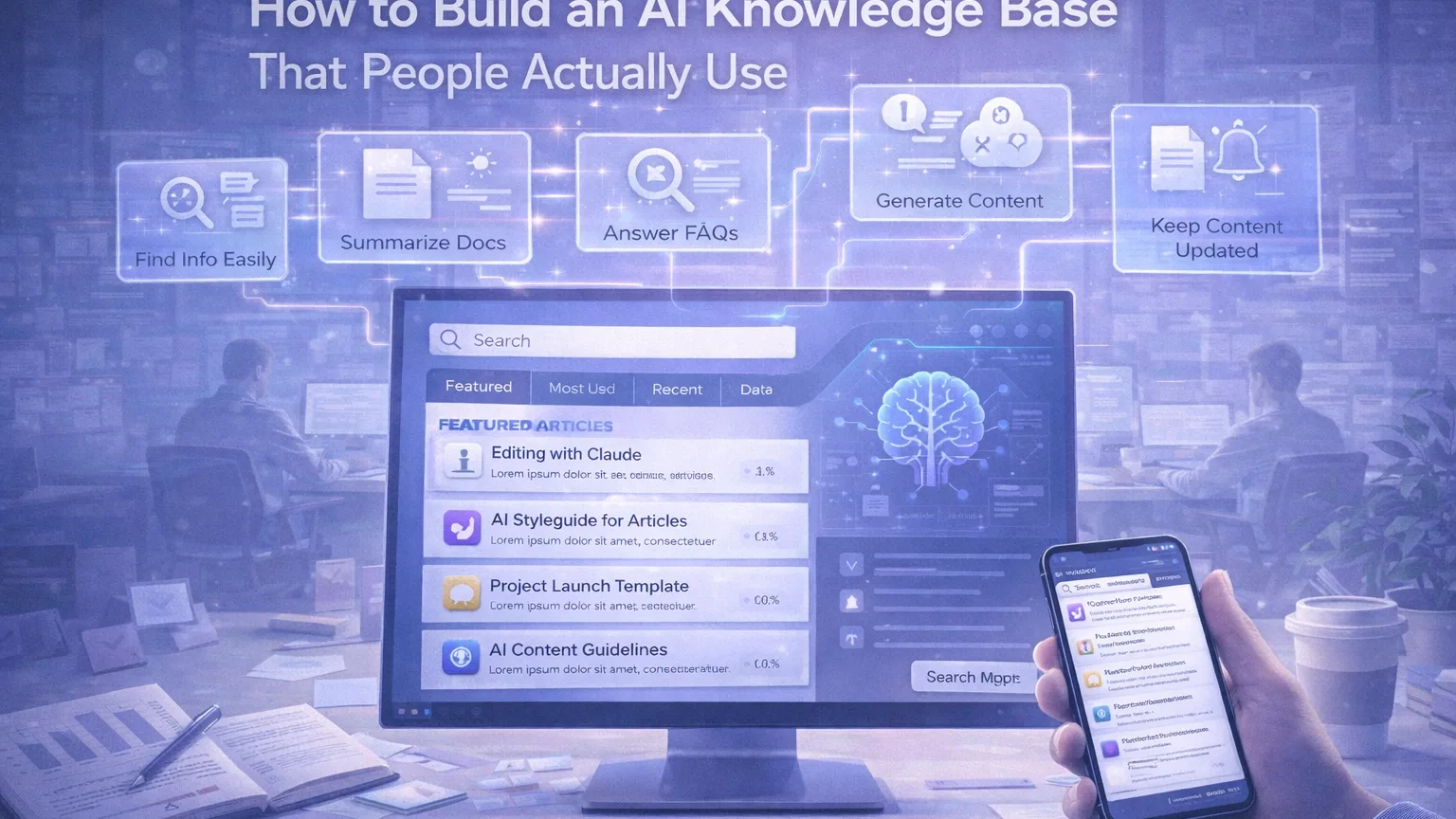

AI can help, but only if the knowledge base is designed around real behavior. The goal is not to dump more content into a smarter search layer. The goal is to make it easier for people to find the right answer, understand the current process, and move forward without asking the same question again.

This guide shows how to build an AI knowledge base that people actually use.

Start with the jobs the system needs to do

A useful knowledge base is not just a document archive. It usually needs to support a few repeatable jobs:

- answer common questions quickly

- explain how a process works

- capture decisions and changes

- make templates easy to reuse

- help new team members get context fast

If the system cannot do those jobs, it does not matter how advanced the AI layer is.

Before choosing tools or building pages, write down the ten questions people on your team ask most often. That list is usually a better starting point than any document tree.

Build around high-trust content first

Not every document should be treated the same.

The biggest mistake teams make is mixing high-trust operating information with low-trust working notes. High-trust content includes things like:

- current onboarding checklist

- approved process docs

- product naming rules

- customer support macros

- legal or policy references

These should not live in the same layer as random brainstorms, outdated meeting notes, or abandoned project pages.

A practical structure is:

1. Source of truth documents

These are the pages people should rely on.

2. Working notes

These are useful, but not canonical.

3. Archived material

This stays searchable if needed, but it should not compete with current guidance.

AI works much better when every page carries a clear confidence level, because retrieval can then prefer source-of-truth pages over working notes and archived material.
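
One way to make those confidence levels concrete is to attach a tier to every page and let it scale the search score. The sketch below is illustrative only: the `Tier` names mirror the three layers above, but the weights, the `Hit` type, and the `relevance` field are assumptions, not part of any particular search product.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    SOURCE_OF_TRUTH = "source_of_truth"
    WORKING_NOTE = "working_note"
    ARCHIVED = "archived"


# Hypothetical weights: tune them for your own content.
TIER_WEIGHT = {
    Tier.SOURCE_OF_TRUTH: 1.0,
    Tier.WORKING_NOTE: 0.5,
    Tier.ARCHIVED: 0.1,
}


@dataclass
class Hit:
    title: str
    tier: Tier
    relevance: float  # raw score from your search layer, assumed to be in 0..1


def rank(hits: list[Hit]) -> list[Hit]:
    """Order hits by relevance scaled by the trust weight of their tier."""
    return sorted(hits, key=lambda h: h.relevance * TIER_WEIGHT[h.tier], reverse=True)


hits = [
    Hit("Onboarding brainstorm (old)", Tier.ARCHIVED, 0.9),
    Hit("Current onboarding checklist", Tier.SOURCE_OF_TRUTH, 0.7),
    Hit("Onboarding sync notes", Tier.WORKING_NOTE, 0.8),
]
ranked = rank(hits)
for hit in ranked:
    print(hit.tier.value, "-", hit.title)
```

With these example weights, the approved checklist outranks the archived brainstorm even though the brainstorm has the higher raw relevance score.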

Keep the structure simpler than you think

A knowledge base should not feel like a maze.

If people have to guess whether something belongs in “Operations,” “Internal Ops,” “Company Systems,” or “Team Processes,” the structure is already too complicated.

Keep the top-level architecture small. In most teams, something like this is enough:

- Company

- Product

- Marketing

- Sales

- Support

- Operations

- Templates

- Decisions

Inside each section, create a small number of repeatable page types instead of inventing new formats every time.

For example:

- overview

- process

- checklist

- template

- decision log

- FAQ

That makes the system easier for both humans and AI to interpret.

Use AI to summarize, not to invent policy

AI is strongest when it helps people navigate and compress knowledge.

Good use cases:

- summarize a long process page

- generate a short answer from approved docs

- turn meeting notes into action items

- suggest related documents

- rewrite dense internal docs into clearer language

Weak use cases:

- inventing company policy from scratch

- answering from mixed outdated content

- generating process steps without a trusted source

A simple rule helps here:

AI can help explain the system, but it should not silently define the system.
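
In practice, that rule can be enforced before the model is ever called. The following is a minimal sketch, assuming each retrieved source carries an `approved` flag. `Source` and `build_prompt` are hypothetical names, and the prompt wording is only an example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Source:
    title: str
    text: str
    approved: bool  # True only for source-of-truth pages


def build_prompt(question: str, sources: list[Source]) -> Optional[str]:
    """Return a prompt grounded in approved sources, or None if there are none."""
    approved = [s for s in sources if s.approved]
    if not approved:
        # No trusted source: route to a human instead of letting the model guess.
        return None
    context = "\n\n".join(f"[{s.title}]\n{s.text}" for s in approved)
    return (
        "Answer using only the approved sources below. "
        "If they do not cover the question, say so.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


sources = [
    Source("Refund policy", "Refunds are approved by the support lead.", True),
    Source("Refund brainstorm", "Maybe automate all refunds?", False),
]
prompt = build_prompt("Who approves refunds?", sources)
```

Returning `None` rather than a best-effort answer is the design choice here: a missing source becomes a visible gap someone can fix, not an invented policy.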

Create better input pages, not just better search

Teams often think the solution is “we need AI search.”

Sometimes the real problem is that the underlying pages are bad.

A page is much more useful to both humans and AI if it includes:

- a clear title

- a short summary at the top

- owner or team

- last updated date

- simple headings

- a small FAQ or key points section

- links to related pages

That structure gives the AI better material to retrieve and summarize. It also helps people decide quickly whether they are on the right page.
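
A small check can flag pages that are missing these basics before they reach the trusted layer. This sketch assumes pages are available as dictionaries. The field names are illustrative, and "simple headings" is left out because it needs human judgment.

```python
# Hypothetical field names that mirror the checklist above.
REQUIRED_FIELDS = [
    "title",
    "summary",
    "owner",
    "last_updated",
    "key_points",
    "related_pages",
]


def missing_fields(page: dict) -> list[str]:
    """Return the required fields that are absent or empty on a page."""
    return [name for name in REQUIRED_FIELDS if not page.get(name)]


page = {
    "title": "Customer refund process",
    "summary": "How support handles refund requests.",
    "owner": "",
    "last_updated": "2024-03-01",
    "key_points": ["Refunds need support lead approval."],
    "related_pages": [],
}
print(missing_fields(page))
```

Here the page would be flagged for a missing owner and missing related links, which are two of the gaps that most often make a page hard to trust.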

Add ownership so the system stays alive

A dead knowledge base is usually an ownership problem.

If nobody is responsible for a section, it will decay.

For each major area, define:

- who owns it

- how often it should be reviewed

- what content should be archived

- what signals indicate it is outdated

Even a lightweight review cadence works well:

- critical operating docs: monthly or quarterly review

- team playbooks: quarterly review

- templates and reference pages: review when workflow changes

AI can help identify stale or duplicated content, but someone still needs to decide what stays authoritative.
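
The review cadence itself is easy to automate, even if the decision about what stays authoritative is not. Here is a rough sketch, assuming each page records a document kind and a last-reviewed date. The interval values are assumptions (30 days stands in for "monthly"), and the kind labels are hypothetical.

```python
from datetime import date

# Assumed intervals in days. Templates and reference pages are reviewed
# when the workflow changes, so they have no timer here.
REVIEW_INTERVAL_DAYS = {
    "critical": 30,
    "playbook": 90,
}


def is_overdue(kind: str, last_reviewed: date, today: date) -> bool:
    """Return True if a page of this kind has gone past its review interval."""
    interval = REVIEW_INTERVAL_DAYS.get(kind)
    if interval is None:
        return False  # no time-based review for this kind of page
    return (today - last_reviewed).days > interval


today = date(2024, 3, 1)
last_reviewed = date(2024, 1, 1)
for kind in ("critical", "playbook", "template"):
    print(kind, is_overdue(kind, last_reviewed, today))
```

The output of a check like this is best treated as a reminder for the owner, not as an automatic archive trigger.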

Make search and browse work together

A lot of people will search. A lot of people will browse. Your knowledge base has to support both.

Search is good for:

- known questions

- urgent task completion

- quick retrieval

Browse is good for:

- onboarding

- learning a system

- exploring related context

- finding the right starting point

That means you should not rely only on AI chat or only on folders. A strong setup usually includes:

- a searchable knowledge layer

- good section landing pages

- clear “start here” pages

- AI-assisted summaries or Q&A on top of trusted content

Use FAQ pages as access points

Some of the best-performing knowledge base pages are not long manuals. They are short answer pages.

If a team asks the same question repeatedly, create a page that answers it directly.

Examples:

- How do we launch a new content workflow?

- Where do approved product screenshots live?

- Which naming format should we use for AI comparison posts?

- How do we request a new tool review?

These pages are useful because they match real language. They also make AI retrieval better because the wording mirrors how people actually ask questions.
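
A toy example shows why matching wording matters. The sketch below scores page titles against a question using plain word overlap (Jaccard similarity). Real retrieval systems typically use embeddings or a search index, so treat this only as an illustration of the effect. The second title is invented for contrast.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap(a: str, b: str) -> float:
    """Jaccard similarity between the word sets of two strings."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def best_match(question: str, titles: list[str]) -> str:
    """Return the title whose wording overlaps most with the question."""
    return max(titles, key=lambda title: overlap(question, title))


titles = [
    "How do we request a new tool review?",
    "Tool Review Intake Procedure",  # hypothetical formal title for the same topic
]
print(best_match("how do I request a tool review", titles))
```

Both titles describe the same topic, but the one phrased as a question shares far more words with what people actually type.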

Link decisions, not just documents

A team knowledge base becomes much more useful when it explains why something changed, not just what the current rule is.

That is where decision logs matter.

For major workflows or policies, add a lightweight decision record with:

- what changed

- why it changed

- who approved it

- when it changed

- what old process it replaced

This prevents the classic “I found two conflicting docs” problem.
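
A decision record does not need special tooling. As a sketch, the fields above map directly onto a small data structure, and the "replaces" field is enough to tell which of two conflicting docs is out of date. The example entry, names, and dates below are invented for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Decision:
    what_changed: str
    why: str
    approved_by: str
    changed_on: date
    replaces: Optional[str] = None  # title of the old process page, if any


def superseded_pages(log: list[Decision]) -> set[str]:
    """Collect every page title that a later decision has replaced."""
    return {d.replaces for d in log if d.replaces}


log = [
    Decision(
        what_changed="Content launches now use the shared launch checklist",
        why="Two teams were following different steps",
        approved_by="Head of Content",
        changed_on=date(2024, 2, 12),
        replaces="Launch checklist v1",
    ),
    Decision(
        what_changed="Added a naming format for comparison posts",
        why="Titles were inconsistent",
        approved_by="Head of Content",
        changed_on=date(2024, 3, 4),
    ),
]
print(superseded_pages(log))
```

Any page whose title appears in the superseded set is a candidate for the archive layer.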

Measure whether people trust it

A knowledge base is not successful just because it has more pages.

Better questions to ask:

- Are repeated questions going down?

- Can new team members self-serve more often?

- Are people using the search layer successfully?

- Are key process pages getting updated?

- Are old pages being archived instead of accumulating forever?

A few simple qualitative checks go a long way.

Ask people:

- Did this answer your question?

- Did you trust the answer?

- Was the page current?

- What did you still need to ask a person?

Those answers tell you more than vanity metrics.
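
If you collect these answers in a short form, a few lines of code turn them into rates you can track over time. This is a minimal sketch: the `Feedback` fields map to the four questions above, and the sample responses are made up.

```python
from dataclasses import dataclass


@dataclass
class Feedback:
    answered: bool          # Did this answer your question?
    trusted: bool           # Did you trust the answer?
    current: bool           # Was the page current?
    still_needed: str = ""  # What did you still need to ask a person?


def summarize(responses: list[Feedback]) -> dict[str, float]:
    """Return the share of responses that said yes to each question."""
    total = len(responses)
    if total == 0:
        return {}
    return {
        "answered_rate": sum(r.answered for r in responses) / total,
        "trusted_rate": sum(r.trusted for r in responses) / total,
        "current_rate": sum(r.current for r in responses) / total,
    }


responses = [
    Feedback(True, True, True),
    Feedback(True, False, True, "Who approves exceptions?"),
    Feedback(True, True, True),
    Feedback(False, False, True, "Where is the template?"),
]
summary = summarize(responses)
open_questions = [r.still_needed for r in responses if r.still_needed]
```

The free-text answers in `open_questions` are often the most useful part, because each one points at a page that is missing or unclear.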

A practical AI knowledge base workflow

A simple, repeatable setup looks like this:

Step 1. Define your trusted content layer

Identify the documents that should actually power answers.

Step 2. Standardize page templates

Make key pages easier to read, review, and summarize.

Step 3. Separate current docs from notes and archive

Do not let AI treat everything as equally reliable.

Step 4. Add AI support on top

Use AI for summarization, retrieval, suggested answers, and FAQ generation.

Step 5. Review stale content regularly

A smart system still fails if the underlying content is wrong.

Final thoughts

An AI knowledge base only works when people trust it enough to return to it.

That trust does not come from adding more content or installing a chatbot. It comes from clearer structure, cleaner source-of-truth content, better ownership, and AI that helps people move faster without creating more confusion.

If your team is already drowning in documents, the answer is not “more docs.”

It is a better system for finding, maintaining, and explaining the knowledge you already have.