Content audits are easy to postpone because they feel bigger than they should.

Most teams know they have outdated pages, overlapping topics, weak internal links, and articles that no longer match search intent. But when the audit starts, the process becomes messy fast. One spreadsheet turns into five. Pages get reviewed with no clear criteria. The team spends days summarizing problems and still does not know what to fix first.

This is where AI helps.

Not by making decisions for you, but by compressing the slow parts of the audit: summarizing pages, grouping similar content, spotting obvious intent mismatches, and turning a large archive into something a team can actually review.

This guide explains a practical AI content audit workflow for 2026.

What an audit should actually produce

A useful content audit should end with decisions, not just observations.

For each URL, the team should be able to answer:

- should this page be updated?

- should it be merged into another page?

- should it be redirected or removed?

- should its search angle change?

- does it need stronger internal links or fresher evidence?

If the output is only a long list of notes, the team still has not finished the job.

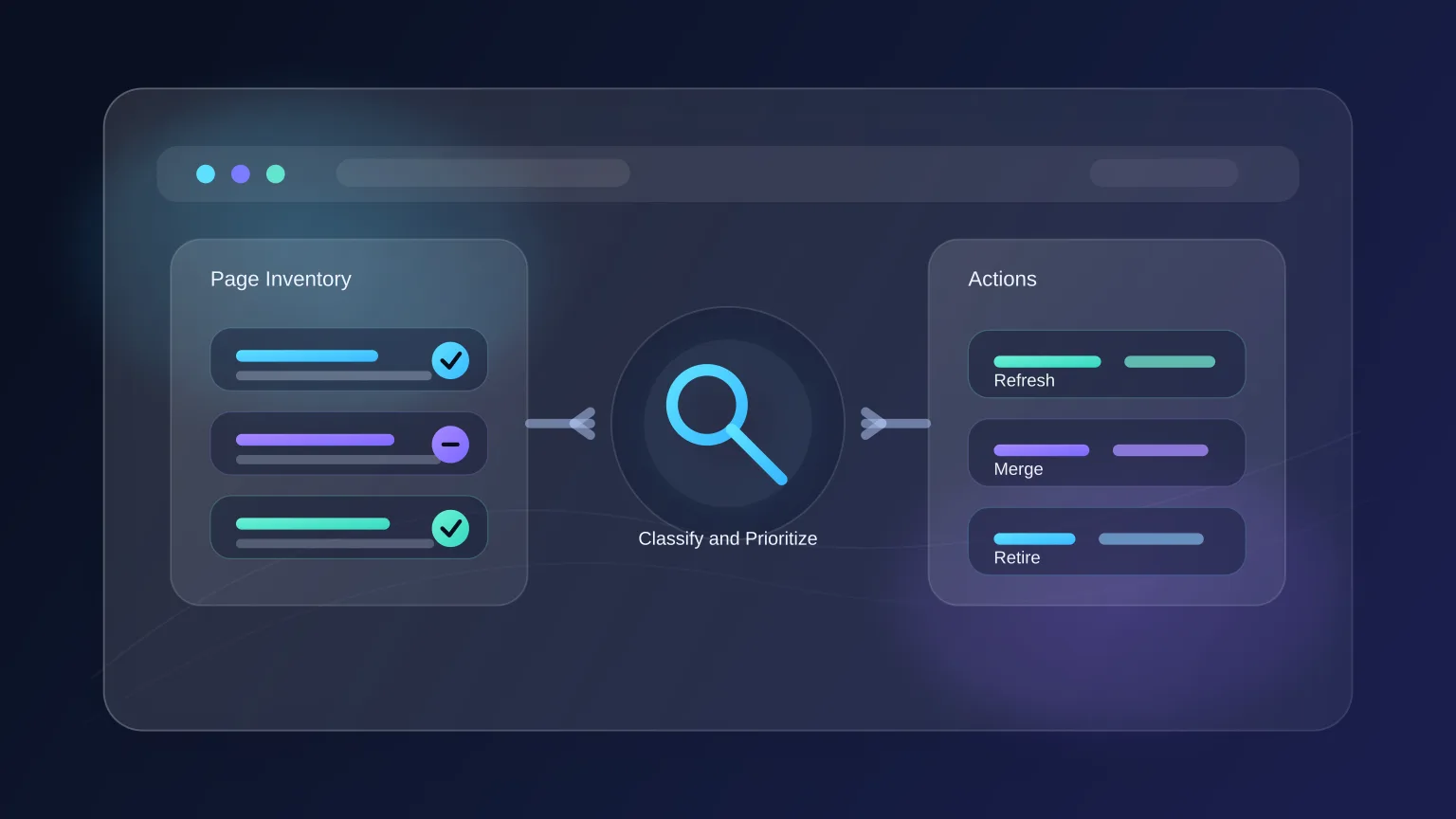

Step 1: Define the audit buckets first

Before using AI, define the decision framework.

For most editorial and SEO teams, five buckets are enough:

- keep as is

- refresh

- expand

- merge

- retire

This matters because AI works better when it is evaluating against a known system. If you ask a model to “review this article,” the feedback usually becomes generic. If you ask it to classify a page against specific audit buckets and explain the reason, the output gets much more useful.
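Here is a minimal Python sketch of bucket-based classification that makes the difference concrete. It only builds the prompt and parses the reply, and it does not call any model. The names `AuditBucket`, `build_classification_prompt`, and `parse_bucket`, along with the `BUCKET: ... | REASON: ...` reply format, are assumptions for illustration and are not part of any tool.

```python
from enum import Enum
from typing import Optional


class AuditBucket(Enum):
    """The five decision buckets from Step 1."""

    KEEP = "keep as is"
    REFRESH = "refresh"
    EXPAND = "expand"
    MERGE = "merge"
    RETIRE = "retire"


def build_classification_prompt(title: str, page_text: str) -> str:
    """Ask for exactly one bucket plus a reason instead of an open-ended review."""
    options = ", ".join(bucket.value for bucket in AuditBucket)
    return (
        f"Classify the page below into exactly one audit bucket: {options}.\n"
        "Reply in the form 'BUCKET: <bucket> | REASON: <one sentence>'.\n\n"
        f"Title: {title}\n\n{page_text}"
    )


def parse_bucket(reply: str) -> Optional[AuditBucket]:
    """Map a reply back to a bucket; None means a human should look at it."""
    label = reply.split("|", 1)[0].lower().replace("bucket:", "").strip()
    for bucket in AuditBucket:
        if bucket.value == label:
            return bucket
    return None
```

Returning `None` for an unrecognized reply is deliberate. Anything the parser cannot map cleanly goes back to a person instead of being forced into a bucket.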

Step 2: Build a page inventory with the right fields

Start with a structured sheet or table. At minimum, include:

- URL

- title

- primary topic or target query

- publish or update date

- page type

- traffic or business importance

- notes

- recommended action

The goal is not to create the perfect database. The goal is to give AI enough context to compare pages consistently.

If performance data exists, add it. If it does not, do not let missing analytics stop the audit. A weak page is often visibly weak even before performance numbers enter the picture.
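One way to keep the inventory consistent is to load the exported sheet into typed records. This sketch assumes a CSV export with hypothetical column names (`url`, `title`, `target_query`, `last_updated`, `page_type`, `importance`, `monthly_sessions`, `notes`, `recommended_action`), so rename them to match your own sheet. A blank `monthly_sessions` cell becomes `None`, which means missing analytics does not block the load.

```python
import csv
import io
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageRecord:
    url: str
    title: str
    target_query: str
    last_updated: str  # publish or last-update date, as exported
    page_type: str
    importance: str  # traffic tier or business importance
    monthly_sessions: Optional[int] = None  # performance data, only if it exists
    notes: str = ""
    recommended_action: str = ""


def load_inventory(csv_text: str) -> list[PageRecord]:
    """Read an exported sheet; blank or absent optional cells become defaults."""
    records = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        sessions = (row.get("monthly_sessions") or "").strip()
        records.append(
            PageRecord(
                url=row["url"],
                title=row["title"],
                target_query=row["target_query"],
                last_updated=row["last_updated"],
                page_type=row["page_type"],
                importance=row["importance"],
                monthly_sessions=int(sessions) if sessions else None,
                notes=row.get("notes") or "",
                recommended_action=row.get("recommended_action") or "",
            )
        )
    return records
```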

Step 3: Use AI to summarize each page quickly

This is one of the highest-leverage parts of the workflow.

Instead of rereading every article in full, use AI to produce a compact summary for each page:

- what the page covers

- who it appears to be for

- what the main promise is

- what is missing

- whether the piece feels introductory, mid-funnel, or highly specific

That summary gives the human reviewer a faster starting point. It also helps when multiple people are involved and need a consistent view of the archive.

The important rule is simple: summarize first, judge second.

Without that separation, teams tend to make decisions too early, based on titles or vague memory.
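Below is a sketch of the summarize-first stage. It assumes the model is asked to answer in JSON and leaves out the model call itself. The key names in `SUMMARY_FIELDS` are illustrative and do not follow any standard schema. The prompt explicitly asks for no recommendation, and `parse_summary` rejects incomplete output so that every reviewer works from the same fields. A separate judging step would then receive the parsed summary instead of the raw page.

```python
import json

# Summary fields from Step 3; the key names are illustrative, not a standard schema.
SUMMARY_FIELDS = ("covers", "audience", "main_promise", "missing", "depth")
DEPTH_LEVELS = ("introductory", "mid-funnel", "highly specific")


def build_summary_prompt(page_text: str) -> str:
    """Stage 1 prompt: describe the page only, with no verdict requested."""
    return (
        "Summarize this page as a JSON object with the keys: "
        + ", ".join(SUMMARY_FIELDS)
        + ". Set 'depth' to one of: "
        + ", ".join(DEPTH_LEVELS)
        + ". Do not recommend any action.\n\n"
        + page_text
    )


def parse_summary(reply: str) -> dict:
    """Reject incomplete summaries so every reviewer sees the same fields."""
    data = json.loads(reply)  # raises a ValueError subclass on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("summary must be a JSON object")
    missing = [key for key in SUMMARY_FIELDS if key not in data]
    if missing:
        raise ValueError(f"summary is missing fields: {missing}")
    if data["depth"] not in DEPTH_LEVELS:
        raise ValueError(f"unexpected depth: {data['depth']!r}")
    return data
```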

Step 4: Look for overlap before looking for quality

A lot of content audits fail because teams review each page in isolation.

That misses one of the biggest problems in most archives: topic overlap.

AI is useful here because it can compare multiple pages and surface patterns such as:

- two articles chasing the same intent with slightly different titles

- several thin posts that should become one stronger page

- pages that are internally competing because the angle is unclear

- older posts that now belong as sections inside a newer guide

This is especially important for SEO. Many archives do not have a “low-quality content” problem so much as a “fragmented topic coverage” problem.
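A cheap pre-filter can shortlist likely overlaps before you ask a model to compare pages. The sketch below substitutes plain lexical Jaccard similarity between page summaries, defined as shared words divided by total distinct words. This is an assumption for illustration and does not describe how any particular AI tool detects overlap. It will also miss pages that overlap in meaning but use different wording. The `threshold` of 0.5 and the stopword list are arbitrary starting points.

```python
import re
from itertools import combinations

STOPWORDS = {"a", "an", "and", "the", "to", "for", "of", "in", "how", "with", "your", "is"}


def terms(text: str) -> set[str]:
    """Lowercase word set with common stopwords removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared terms divided by all distinct terms; 0.0 if either set is empty."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def overlap_candidates(pages: dict[str, str], threshold: float = 0.5) -> list[tuple[str, str, float]]:
    """Return URL pairs whose summaries share many terms, most similar first."""
    term_sets = {url: terms(text) for url, text in pages.items()}
    pairs = []
    for u1, u2 in combinations(sorted(term_sets), 2):
        score = jaccard(term_sets[u1], term_sets[u2])
        if score >= threshold:
            pairs.append((u1, u2, round(score, 2)))
    return sorted(pairs, key=lambda p: p[2], reverse=True)
```

For example, the summaries “content audit checklist for SEO teams” and “SEO content audit guide for teams” share four of six distinct terms and score about 0.67. That pair would be flagged so a person can decide whether a merge makes sense.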

Step 5: Check intent drift

Even good articles decay when search intent changes.

A page that used to rank because it answered a broad question may now underperform because search results have become more specific, more commercial, or more comparison-oriented.

AI can help spot intent drift by reviewing:

- the article’s current angle

- the implied user goal

- the type of SERP the query likely maps to

- whether the page matches an informational, comparison, workflow, or tool-selection intent

This should not replace a manual SERP review. It should narrow down which pages need that review first.
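Here is a minimal triage sketch. It assumes each page already carries two labels, both drawn from the four intent types above: the intent the page currently serves, and an AI estimate of the intent its query now maps to. The `IntentCheck` fields and the 1 to 3 importance scale are hypothetical. The function makes no decisions. It only orders the mismatched pages so that the manual SERP review starts with the ones that matter most.

```python
from dataclasses import dataclass

INTENTS = {"informational", "comparison", "workflow", "tool-selection"}


@dataclass
class IntentCheck:
    url: str
    page_intent: str  # intent the page currently serves (e.g. from its summary)
    likely_serp_intent: str  # AI estimate of the query's current intent, unverified
    importance: int  # hypothetical scale: 1 (low) to 3 (high)


def serp_review_queue(checks: list[IntentCheck]) -> list[str]:
    """URLs whose angle may have drifted, most important first, for manual review."""
    for c in checks:
        for label in (c.page_intent, c.likely_serp_intent):
            if label not in INTENTS:
                raise ValueError(f"{c.url}: unknown intent {label!r}")
    drifted = [c for c in checks if c.page_intent != c.likely_serp_intent]
    drifted.sort(key=lambda c: c.importance, reverse=True)
    return [c.url for c in drifted]
```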

Step 6: Score pages with a simple rubric

Once summaries and overlap checks exist, score pages against a lightweight rubric. Keep it simple enough that anyone on the team can use it.

One practical rubric:

- relevance to current search intent

- depth and completeness

- freshness of examples and claims

- internal-link value

- business or strategic importance

You do not need a complex weighted framework unless the archive is very large. Most teams benefit more from consistency than from sophistication.
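An unweighted version of this rubric needs only a few lines. In this sketch, the criterion keys and the 1 to 5 scale are assumptions. Weights are optional, and any criterion left out of `weights` counts as 1.0. A team can therefore start with plain averages and add weighting later if the archive grows.

```python
from typing import Mapping, Optional

# Rubric criteria from Step 6; the key names and the 1-5 scale are illustrative.
CRITERIA = (
    "intent_relevance",
    "depth",
    "freshness",
    "internal_link_value",
    "business_importance",
)


def rubric_score(scores: Mapping[str, int], weights: Optional[Mapping[str, float]] = None) -> float:
    """Average 1-5 scores across all criteria; unlisted weights default to 1.0."""
    for name in CRITERIA:
        value = scores.get(name)
        if value is None or not 1 <= value <= 5:
            raise ValueError(f"{name} needs a score from 1 to 5")
    w = {name: 1.0 for name in CRITERIA}
    if weights:
        w.update(weights)
    total_weight = sum(w[name] for name in CRITERIA)
    weighted_sum = sum(scores[name] * w[name] for name in CRITERIA)
    return round(weighted_sum / total_weight, 2)
```

For example, scores of 2, 3, 1, 4, and 5 (in the order above) average to 3.0. Doubling the weight on business importance moves the same page to 3.33.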

Step 7: Turn the audit into a production queue

This is the step teams skip most often.

An audit becomes useful only when it feeds the editorial workflow. That means every page with an action should move into one of a few queues:

- quick refreshes

- major rewrites

- merge projects

- redirects and removals

- net-new replacement content

AI can help draft the first-pass rewrite brief for each page. That brief should include:

- current problem

- target angle

- sections to keep

- sections to cut

- related pages to link

- source gaps to fill

At that point, the audit stops being a spreadsheet exercise and becomes operational.
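The following sketch routes audit actions into queues, with each item carrying the brief fields listed above. The action-to-queue mapping is an assumption. For instance, it treats “expand” as a major rewrite. The `replace` action is a hypothetical addition for pages whose topic should be covered by new content. Adjust both to fit your own process. An unknown action raises `KeyError`, so a mislabeled row surfaces instead of silently disappearing.

```python
from collections import defaultdict
from dataclasses import dataclass, field

# Illustrative mapping from audit actions to production queues.
# "replace" is a hypothetical extra action, not one of the five Step 1 buckets.
QUEUE_FOR_ACTION = {
    "refresh": "quick refreshes",
    "expand": "major rewrites",
    "merge": "merge projects",
    "retire": "redirects and removals",
    "replace": "net-new replacement content",
}


@dataclass
class RewriteBrief:
    url: str
    action: str
    current_problem: str
    target_angle: str
    keep_sections: list[str] = field(default_factory=list)
    cut_sections: list[str] = field(default_factory=list)
    related_links: list[str] = field(default_factory=list)
    source_gaps: list[str] = field(default_factory=list)


def build_queues(briefs: list[RewriteBrief]) -> dict[str, list[RewriteBrief]]:
    """Group briefs by production queue; 'keep' pages need no work and are skipped."""
    queues: dict[str, list[RewriteBrief]] = defaultdict(list)
    for brief in briefs:
        if brief.action == "keep":
            continue
        queues[QUEUE_FOR_ACTION[brief.action]].append(brief)
    return dict(queues)
```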

Where AI helps most and where it does not

AI is strongest in content audits when the work is repetitive and comparative.

Good uses:

- summarizing articles at scale

- extracting likely audience and intent

- grouping overlapping pages

- drafting update briefs

- turning messy notes into action lists

Weak uses:

- making final keep/merge/retire decisions without context

- judging factual accuracy without source review

- deciding strategy without business priorities

The human team still needs to decide what matters. AI just reduces the cost of getting to that decision.

A practical operating rhythm

Most teams do not need a giant annual audit.

A more useful rhythm is:

- monthly mini-audits for the newest cluster

- quarterly audits for core evergreen pages

- full archive review only when the site changes direction

That keeps the backlog manageable and prevents content decay from becoming a huge cleanup project later.
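The cadence can be expressed as a simple due-date check. The tier names are hypothetical labels, and 30 and 91 days approximate “monthly” and “quarterly.” Pages outside both tiers are only picked up when a full archive review is scheduled.

```python
from datetime import date, timedelta
from typing import Optional

# Hypothetical tier labels; 30 and 91 days approximate "monthly" and "quarterly".
CADENCE_DAYS = {"newest_cluster": 30, "core_evergreen": 91}


def is_due(tier: str, last_reviewed: Optional[date], today: date) -> bool:
    """True when the tier's interval has elapsed since the last review."""
    if tier not in CADENCE_DAYS:
        return False  # everything else waits for a full archive review
    if last_reviewed is None:
        return True  # never reviewed, so include it in the next cycle
    return today - last_reviewed >= timedelta(days=CADENCE_DAYS[tier])
```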

Final takeaway

The best AI content audit workflow does not try to automate judgment. It automates the slow setup work around judgment.

When the team has clear audit buckets, structured page summaries, overlap detection, and a direct path from findings to production, audits stop being painful.

They become part of normal content operations.